Apple's MM1 Revolution: Paving the Way for a Smarter, Next-Gen Siri Experience

Discover how Apple's new MM1 model, a multimodal large language model, is set to revolutionize Siri with superior prompt understanding and efficiency. Learn about the future of AI in Apple's ecosystem.

Faheem Hassan

3/20/2024 · 2 min read

Apple Unveils MM1: The Future Engine Behind Next-Generation Siri

Apple has introduced MM1, a new family of multimodal large language models (MLLMs) poised to redefine the capabilities of virtual assistants. Detailed in a recent research paper by Apple's team, MM1 is a significant step forward in artificial intelligence, potentially setting the stage for the next iteration of Siri, Apple's virtual assistant.

A New Approach to Multimodal AI

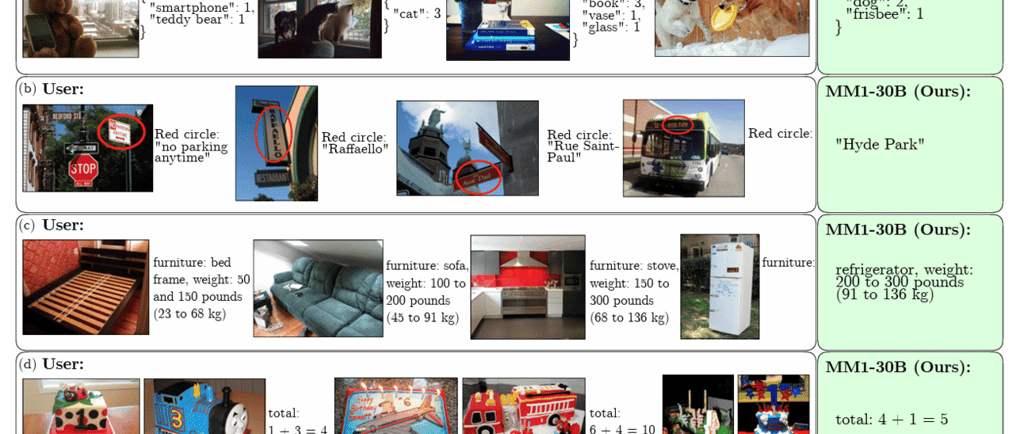

The research paper presents a novel methodology for training multimodal models, which are capable of understanding and processing multiple types of data, such as images and text. This approach leverages synthetic data to enhance the model's learning process, enabling it to grasp complex queries with greater accuracy.

Compact Yet Powerful

Despite its relatively modest size, with the largest variant at 30 billion parameters, MM1 demonstrates remarkable capabilities. That is a stark contrast to giants like GPT-4 and Claude 3 Opus, whose parameter counts are undisclosed but widely reported to be far larger. Apple's researchers assert that MM1 can compete with these larger models on key benchmarks. One of the standout features of MM1 is its strong prompt understanding, which reduces the need for follow-up prompts to achieve accurate results. This efficiency in understanding and processing user queries could change how users interact with AI, making interactions more fluid and intuitive.

The Future of Siri

While the research paper stops short of directly mentioning Siri, the implications of MM1's capabilities for Apple's virtual assistant are unmistakable. The focus on performance, prompt efficiency, and multimodal understanding suggests that MM1 could be the driving force behind a more advanced and versatile Siri. This aligns with Apple's ongoing efforts to enhance Siri's functionality, making it a more integral and capable part of the Apple ecosystem.

Gemini and the AI Horizon

Adding to the anticipation, recent reports from Bloomberg indicate that Apple is in talks to bring Google's Gemini to the iPhone, signaling a significant AI initiative. This, coupled with CEO Tim Cook's promises of an AI breakthrough, suggests that Apple is preparing a major AI push for its users.

Conclusion

Apple's MM1 model represents a pivotal advancement in the field of artificial intelligence, with the potential to power the next generation of Siri. Its innovative approach to multimodal learning, combined with its efficiency and compact size, sets a new standard for AI development. As Apple continues to push the boundaries of what's possible with AI, users can look forward to a future where virtual assistants are more responsive, understanding, and integrated into our daily lives than ever before.